Gasgoo Munich - A shadow hangs over Nvidia.

On April 30, Nvidia CEO Jensen Huang sat down with the Special Competitive Studies Project (SCSP) and delivered a line destined for endless replay: "Our market share in China's AI accelerator business is now basically zero."

That remark was quickly dissected across the tech press and investment circles, sparking sharply different reactions. Some saw a "victory" for U.S. export controls; others saw a crisis where China's AI computing power would be "choked off." Still others sensed the signal for an explosion in domestic alternatives.

To be clear, Huang was referring to Nvidia's "direct sales" to China approaching zero—not a total disappearance of Nvidia chips from the market.

Yet that subtle shift is enough to expose a structural overhaul now sweeping through China's semiconductor supply chain. From data centers to auto plants, from GPUs to MCUs, and from Wall Street to domestic stock exchanges, everyone is recalibrating their position around this "near-zero" reality.

Behind the "Near Zero": The End of Legal Channels, and Who Fills the Void?

According to Bernstein Research, Nvidia held roughly 66% of China's AI GPU market in 2024. If Huang's assessment holds, that figure plummeted from two-thirds to near zero in under two years—a pace far outstripping any model prediction. Bernstein's most pessimistic forecast had previously pegged the drop at around 8%. Reality, however, has proven harsher.

Driving this is a dramatic escalation in U.S. chip export controls over the past year, marking a qualitative shift in how restrictions are applied. In April 2025, new measures effectively barred Nvidia's China-specific H20 chip and AMD's MI308 from the market. By July, Nvidia announced it would resume H20 sales, with the U.S. government promising a license. Two days after Nvidia's CEO visited the White House to meet with Trump in August, that license was swiftly approved. But the conditions were unprecedented: a requirement to hand over 15% of the revenue from these chips to the U.S. government.

Then, in December 2025, Trump announced that Nvidia would be permitted to export the higher-end H200 to China—on the condition that the U.S. government take a 25% cut of sales.

Trade compliance experts have a precise name for this model: "transactional containment."

The United States is no longer pursuing a total technology blockade—that has proven too costly and full of loopholes. Instead, it has turned chip exports into a bargaining chip that can be renegotiated and escalated at any time. The unpredictable approval cycles for licenses, the room for negotiation over attached conditions, and the shifting political winds make large-scale, compliant commercial exports effectively unfeasible.

Nvidia's direct sales to China have transformed from a business with stable expectations into a "hostage" that might be traded away at any moment in a diplomatic game.

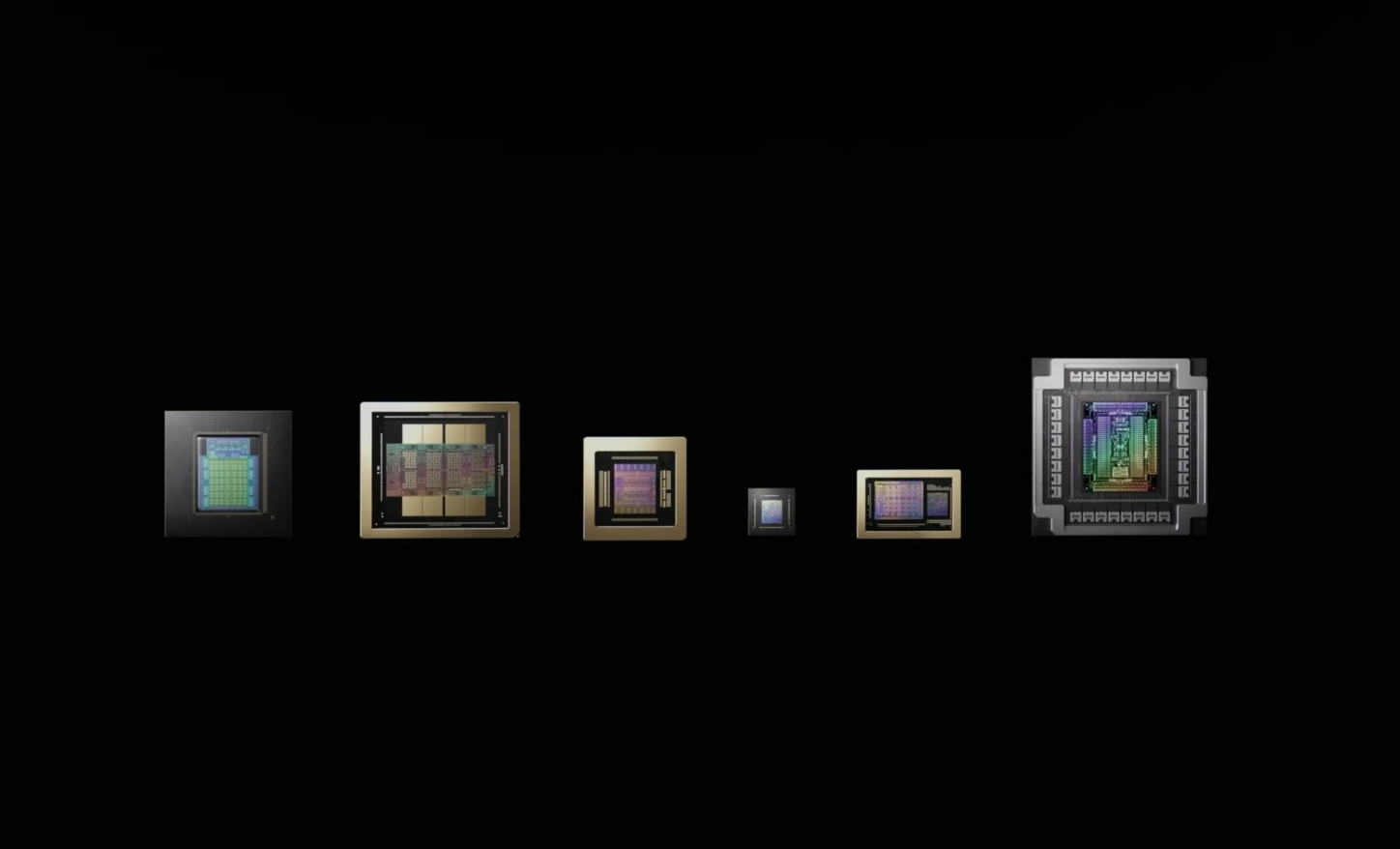

Image source: Nvidia

Yet China's vast demand for computing power won't evaporate due to policy restrictions; it will simply find new channels.

There are at least three alternative paths: gray-market channels via third-country transshipment, Chinese companies deploying computing power overseas and routing it back, and—crucially—large-scale substitution by domestic AI chips.

Third-party research data suggests that by 2026, excluding self-developed chips from internet giants, the total supply of domestic high-performance AI inference chips is expected to reach around 3 million units. The first-tier trio of Huawei Ascend, Cambricon, and Hygon will contribute roughly 1.6 million to 1.7 million of those, while second-tier players like MetaX and Biren will supply nearly 1 million. A domestic alternative supply chain is taking shape far faster than outsiders expected.

Jensen Huang knows this well. In the same interview, he noted that even without advanced U.S.-developed AI GPUs and software stacks, "China is a competitor that cannot be ignored in the frontier AI model space," adding that the country's pool of scientists and mathematicians is a "national treasure." The essence of these remarks is not praise for China's AI, but a warning to U.S. policymakers: the more you blockade, the faster your rival learns to build its own.

There is really only one question worth asking: of the "direct sales share" Nvidia has lost, how much has flowed to domestic chips, and how much to gray channels?

There is no precise answer yet, but one basic judgment holds: the Chinese market is switching operating modes. And that switch is sending violent ripples through the downstream supply chain.

The Chip Crisis Spreads to Wheels: Who Pays the Cost?

While the "near zero" figure Huang spoke of is being chewed over in the tech media, another group is feeling the weight of chip costs in cold, hard cash.

They are from the automotive industry.

At the China EV 100 Forum in April 2026, NIO Chairman William Li (Li Bin) cited a striking set of numbers: battery and chip costs now account for more than 50% of the total cost of a smart electric vehicle. For automakers, that 50% is essentially "out of control."

Take the NIO ES9 as an example: a single vehicle uses more than 1,000 distinct semiconductor part numbers and more than 4,000 chips. Chips with identical functions end up with different part numbers because of differing suppliers or procurement batches, which sharply increases management complexity. NIO is currently working on standardization, aiming to cut the count to 400 part numbers.

Li revealed that NIO used Nvidia chips exclusively in previous years, with peak purchases reaching $300 million worth of Nvidia chips annually.

"If supply and demand get misaligned twice for a single model, wasting hundreds of millions is normal," Li said bluntly. "The manufacturer doesn't earn it, the supply chain doesn't earn it, and the user doesn't benefit—it just goes to waste."

"Out of control" captures the true predicament automakers face with the chip supply chain. Yet the root of this loss of control doesn't lie within the auto industry itself.

As global demand for AI computing power explodes and data centers snatch up advanced chip capacity "at any cost," chip procurement for the auto industry is being systematically squeezed.

Industry surveys show that since the start of 2026, the semiconductor sector has been hit by a wave of price increases spreading from memory to the entire supply chain. Numerous companies have issued price hike notices, generally ranging from 10% to 20%, with some categories reaching 50% to 80%. This covers MCUs, analog chips, memory, power devices, image sensors, and more.

In April this year, reports surfaced that Intel and AMD had notified clients of price increases across their entire server CPU lines, with rises generally between 10% and 15% since February. AMD has repeatedly emphasized that its 2026 CPU capacity is completely sold out, and it expects annual growth in its data center business to exceed 60% over the next three to five years, with no signs of demand slowing.

At the same time, the demand side for computing power is experiencing an exponential explosion.

Liu Liehong, head of China's National Data Bureau, disclosed a set of figures: in early 2024, daily token usage in China stood at 100 billion; by the end of 2025, it had leaped to 100 trillion; and this March, it surpassed 140 trillion—a more than thousand-fold increase in two years.
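The growth multiple can be checked directly from the three figures Liu cited. The following is a minimal sketch of that arithmetic; the variable names are illustrative, "this March" is assumed from context to mean March 2026, and the 140 trillion figure is treated as a lower bound because the source says usage "surpassed" it.

```python
# Daily token usage in China, as cited by Liu Liehong (tokens per day).
early_2024 = 100 * 10**9     # 100 billion
end_2025 = 100 * 10**12      # 100 trillion
march_2026 = 140 * 10**12    # "surpassed 140 trillion": treated as a lower bound

# Growth multiples relative to the early-2024 baseline.
growth_to_end_2025 = end_2025 / early_2024
growth_to_march_2026 = march_2026 / early_2024

print(f"Early 2024 to end of 2025: {growth_to_end_2025:,.0f}x")
print(f"Early 2024 to March 2026: at least {growth_to_march_2026:,.0f}x")
```

On these numbers the thousand-fold mark was already reached by the end of 2025, and the March figure pushes the multiple to at least 1,400.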

On one side is the "at any cost" buying spree by data centers; on the other is the explosive growth of large model inference demand. Where these two curves intersect, the result is singular: the automotive industry is facing a "computing power squeeze-out."

Cao Guangping, a partner at Chafu Consulting, offered a clear judgment in an interview with Gasgoo. "Looking at the vehicle side of smart EVs, cars in the intelligence era are essentially working for battery cell and chip companies, which indeed makes profitability difficult," Cao noted, pointing out that automakers like BYD have already announced price hikes due to rising costs of intelligent components.

He defines this as "both a short-term adjustment and a long-term trend," as vehicle products will undergo long-term intelligent upgrades from L2 to L5. Demand for chip quantity, functionality, and performance will remain on a long-term growth trajectory. "Automakers without an intelligence advantage will at least face elimination."

Under this survival pressure, in-house chip development by automakers is no longer a technological exploration—it is supply chain self-preservation.

Those automakers that moved first are now seeing returns.

Li revealed that NIO's cumulative production of self-developed chips has exceeded 550,000 units, and he expects the localization rate of automotive semiconductors to reach 35% to 40% by 2027. On an earnings call in March, he further stated that the second advanced smart chip from Shenji, targeting a broader customer base, has successfully taped out and entered mass production.

In the same month, XPENG Chairman He Xiaopeng disclosed that since mass production and installation began in the third quarter of 2025, cumulative shipments of the Turing chip have exceeded 200,000 units. Starting in the second quarter of this year, the entire model lineup will switch to self-developed chips, with a full-year shipment target approaching 1 million units.

Image source: XPENG official website

Li Auto's in-house Mach 100 chip will also go into mass production and be installed in vehicles in the second quarter. Even traditional automakers are joining the fray; China FAW has partnered with industry players to develop the "Hongqi 1," an automotive-grade, advanced-process, multi-domain fusion chip.

This represents a divergence between the "full-stack control" faction and the "supply chain integration" faction.

Tesla, NIO, Li Auto, and XPENG pursue the ultimate coupling of algorithms and hardware, seeking to control the pricing power of intelligent driving. Most traditional automakers, meanwhile, rely on mature third-party platforms for rapid mass production. But regardless of the path, the logical starting point is the same: chip costs cannot remain "out of control."

It is worth noting that the computing power requirements for automakers' self-developed chips are mostly concentrated in mid-to-low-end process nodes for inference and edge computing scenarios, rather than the AI training scenarios where Nvidia excels.

This means that in-house development by automakers is not about challenging Nvidia's dominance, but about finding a pragmatic path to bypass the pain points of being "choked." Together with data-center-level domestic chips like Huawei Ascend, Cambricon, and Hygon, they form a landscape of multi-layered substitution for Nvidia's market share.

Price Hikes, Surges, and "Survival of the Fittest": What Domestic Chips Are Going Through

Capital markets are the most sensitive.

On May 6, Hygon Information, the leading computing-chip stock on the A-share market, rose by its 20% daily limit and closed at a record high, with a total market capitalization exceeding 820 billion yuan, up nearly 60% this year. Just days earlier, on April 30, Cambricon also rose by the 20% limit to close at 1,699.96 yuan per share, reclaiming its title as the A-share market's highest-priced stock with a monthly gain of over 62%.

The soaring share prices are backed by delivered earnings. Cambricon's first-quarter revenue for 2026 was 2.885 billion yuan, up 159.56% year-on-year; net profit attributable to shareholders was 1.013 billion yuan, up 185.04% year-on-year and up 122% from the 455 million yuan in the fourth quarter of 2025. Domestic chip makers, once criticized as "cash burners," have finally crossed the critical inflection point from loss to profitability.
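The Cambricon figures can be cross-checked with simple arithmetic. The sketch below uses only the numbers reported above; the net margin and the implied prior-year values are derived here for illustration and are not stated in the source.

```python
# Cambricon Q1 2026 results as reported above (billion yuan).
q1_2026_revenue = 2.885
q1_2026_profit = 1.013
q4_2025_profit = 0.455

# Quarter-on-quarter profit growth, to compare with the reported "up 122%".
qoq_growth_pct = (q1_2026_profit / q4_2025_profit - 1) * 100

# Derived values (not in the source): net margin and implied Q1 2025 base.
net_margin_pct = q1_2026_profit / q1_2026_revenue * 100
implied_q1_2025_revenue = q1_2026_revenue / (1 + 159.56 / 100)
implied_q1_2025_profit = q1_2026_profit / (1 + 185.04 / 100)

print(f"QoQ profit growth: {qoq_growth_pct:.1f}%")
print(f"Net margin: {net_margin_pct:.1f}%")
print(f"Implied Q1 2025 revenue: {implied_q1_2025_revenue:.3f} billion yuan")
print(f"Implied Q1 2025 profit: {implied_q1_2025_profit:.3f} billion yuan")
```

The quarter-on-quarter result lands between 122% and 123%, consistent with the reported figure, and the derived net margin of roughly 35% shows how much of the revenue growth reached the bottom line.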

Two core engines are driving this explosion.

First is the "vacuum market" created by export controls. TrendForce data estimates that China's overall high-end AI chip market will grow by more than 60% in 2026, and local chip design firms with growth potential are expected to expand their market share to around 50%. CITIC Securities, meanwhile, predicts that the domestic AI chip market will surpass 300 billion yuan this year.

Second is the real explosion on the demand side. Chinese large AI models already occupy six of the top ten spots globally, and domestic computing demand is shifting from "cloud training" to a dual drive of "training plus inference." The industry views 2026 as year one for deploying AI applications, with consumer-side traffic and business-side vertical models jointly driving a massive increase in inference computing consumption.

But as with any industry experiencing explosive growth, the weak spots beneath the glare of the numbers deserve a hard look.

Image source: Nvidia livestream screenshot

The rise of domestic chips is not a straight line. Cao Guangping offered a four-dimensional analytical framework: the advantages of domestic chips are concentrated in market demand, policy drivers, rapid product iteration by hardware companies, and improvements in software stacks and code replacement. But the bottlenecks are equally prominent. "Constraints in high-end processes, a gap in chip computing power, and disparities in integration capabilities and the industrial ecosystem," Cao said.

This judgment aligns closely with widespread industry observation. On the hardware level, the gap between domestic chips and the leading international standard has narrowed to one or two generations, but in the software ecosystem, the gap is actually wider. The developer stickiness and toolchain barriers built by the CUDA ecosystem are still difficult to fully break through in the short term. Currently, domestic chips are making faster progress in adapting to inference scenarios, but in large model training, Nvidia's ecosystem remains dominant.

Additionally, the current wave of chip price hikes is a double-edged sword. On one hand, rising prices mean a supply-demand balance favorable to suppliers, and the pricing power and profits of domestic chip makers are expanding—"computing power inflation" has become an industry consensus. But on the other hand, as price increases for CPUs, memory, and MCUs are passed downstream, the pressure on terminal industries like automotive and consumer electronics is constantly accumulating.

Regarding how automakers can find a way out under this pressure, Cao offered a pragmatic judgment: "Strategic cooperation with chip companies or self-developed chips is almost an inevitable path. And this must be based on automakers' current product profitability and scale levels. To this end, automakers need to find their unique advantages in chip production, development, and application, and adopt a 'multi-channel' procurement strategy combining self-supply and external sourcing to ensure that the improvement of product intelligence does not stall."

As William Li said, chip costs are "out of control." And this loss of control will not disappear on its own; it will only shift between different links of the supply chain: from foundries to chip design companies, from automakers to consumers. Every shift means a reshuffling of the interest landscape.

Conclusion

Jensen Huang stated bluntly that Nvidia's business in China is currently almost completely stalled.

This is a cross-section of a structural overhaul of the global semiconductor supply chain. The trend it reveals is clear: export controls have not "contained" China's AI; they have simply altered the supply structure of China's AI chips. That structure has shifted from a single source heavily dependent on Nvidia to a diverse ecosystem composed of Huawei Ascend, Cambricon, Hygon, self-developed chips from internet giants, and self-developed chips from automakers.

This ecosystem is far from mature; software adaptation still takes time, and training scenarios remain a weak point. But its direction is now irreversible.

At the end of the interview, Huang warned that narratives of threat and export controls might slow down AI deployment on a macro level. Long-term leadership, he argued, should not rely on restricting global competitors, but on "ensuring that the U.S. AI ecosystem dominates globally."

"Zero" is an endpoint, but even more so, a starting point.

It ends the old order where Nvidia reigned supreme and Chinese customers waited in line for goods. It opens a long contest involving technology roadmaps, industrial security, and commercial maneuvering.

The outcome of this contest will not depend on a single sentence from anyone, but on who can truly bridge the gap from "usable" to "excellent."