Gasgoo Munich - In 2026, a defining signal is emerging in the Chinese auto market: intelligent driving is no longer optional; it is becoming the car's "soul."

This isn't just marketing rhetoric; the data backs it up. NOA user activity is climbing faster than expected, and automakers' intelligent driving systems are moving beyond the functional layer into the experiential, and even emotional, level.

But the flip side is that forging this "soul" is far harder than imagined.

During the 2026 Intelligent Electric Vehicle Development Forum, She Shidong, Great Wall Motor's deputy general manager of intelligent products, admitted that the integration of large models into smart cockpits has "just passed the starting line"—lagging far behind the internet industry's rapid iteration.

A harsher reality looms: as automakers battle fiercely for entry into the large-model era, the industry realizes the real watershed isn't computing power, algorithms, or parameter scale. It lies in whether an automaker possesses its own independent intelligent system capability.

The Homogenization Trap Has Arrived

An awkward truth has surfaced: cockpit interaction has become highly homogenized.

Different brands are presenting nearly identical interfaces—3D car models, wallpaper desktops, layered navigation and driving screens, fixed dock bars, and a crowded ecosystem of apps.

"We fed over 200 different interaction interfaces on the market into a large model, and it concluded the similarity was over 95%," She revealed. The entire industry has entered a "very painful situation"—where automakers find they have "no rice to cook" when trying to create something new.

Image Source: Great Wall Motor

This isn't just Great Wall's problem.

From Huawei's HarmonyOS Cockpit to BYD's DiLink, XPENG's Xmart to Li Auto's LiOS, the underlying logic of these cockpit operating systems is converging, and their function matrices overlap heavily. The differences lie only in UI design, voice assistant persona, and the sheer volume of apps.

When competition degrades into "whose screen is bigger" or "who has more apps," the easy gains in this segment have run dry.

Yet, a new variable is emerging: large models are shifting from "backend tools" to "system kernels."

She outlines this evolution in three phases: from 2022 to 2023, large models intervened superficially as "content generators"—chatbots, wallpaper creators, route planners—a stage he calls the "post-positioned large model."

Then came "voice agents" capable of context understanding and memory.

In the second half of 2025, as Tesla deployed Grok in North America and domestic rivals raced to be first to market, in-vehicle large models began evolving into "conversational native entry points."

This is a critical turning point. When the large model is no longer an add-on feature but the cockpit's underlying architecture, the logic of competition changes completely. It is no longer about "how many apps you have," but "how well you understand the user."

However, this path is littered with challenges traditional automakers have never faced before.

She listed three core difficulties in the interview:

First, insufficient edge computing power. Mainstream automotive chips currently offer 50 to 60 TOPS, enough only for small models under 1 billion parameters. This creates a generational gap in cognitive ability compared to giants like Doubao or Qianwen.

Second, a lack of mature solutions for cabin space modeling. Neither the automotive nor the internet industry has solved the problem of "cabin spatial relationship modeling."

Third, automakers' utilization of their own automotive know-how—such as optimal air conditioning strategies for different scenarios—"lags far behind the internet industry."

In short, traditional automakers hold the richest automotive data but are the least skilled at feeding it to AI. This constitutes the core contradiction in the intelligent driving sector today.

The Three-Game Battle for In-Vehicle Large Models

If we deconstruct the 2026 intelligent driving competition into three battlefields, we see AI rewriting the rules of each.

The first front is the algorithm war between end-to-end and VLA models.

From BEV+Transformer multi-stage solutions to single-stage end-to-end, and then to VLA (Vision-Language-Action) models, the technical roadmap has iterated three times in just two years.

Li Auto released its VLA driver model early this year and announced a pivot to an "embodied intelligence enterprise," while the IM LS8 became the world's first vehicle equipped with the Qianwen large model. The speed of algorithmic evolution means the industry barely has time to digest one generation before the next arrives.

Image Source: Momenta

But She offers a sobering assessment: "Core algorithms aren't actually the hardest part of the intelligent driving engineering... the truly hardest part is the full-link integration and collaboration."

Take Great Wall as an example: after seven or eight years of proprietary R&D, the reuse rate of its engineering and data training chains is approaching 80% to 90%. The code value of the core algorithms, he notes, is "worth maybe 100 to 200 million" in the industry. The real differentiator is systems engineering capability: data labeling, model training, and evaluation systems.

The subtext is clear: the algorithm gap is closing fast, but "engineering capability" is the true moat. The winners will be those who can deploy intelligent driving systems across vehicles ranging from 50 to 700 TOPS while maintaining a consistent user experience.

The second front is the arms race in edge computing power.

By 2026, computing power in high-end smart vehicles has jumped from the previous generation's 200 TOPS to over 700 TOPS. Great Wall's Guiyuan platform features Nvidia's Thor chip, delivering over 700 TOPS for the driving domain and 300 TOPS for the cockpit domain—totaling roughly 1,000 TOPS.

She likens these two domains to the "left brain" (driving) and "right brain" (cockpit), equipped with VLA and spatial VLA models respectively, forming the brain of the vehicle agent.

But the end of the computing race isn't about stacking chips; it's about deployment efficiency. How do you deploy a sufficiently smart model within strict power and thermal limits while ensuring millisecond response times? This is both a technical and a cost challenge.

BYD's move to bring LiDAR to the 100,000-yuan price class proves cost reduction is possible, but doing so without sacrificing the intelligent experience remains an unsolved puzzle.

The third front is the battle for ecosystem choice.

By 2026, nearly every major large model player has entered the automotive space—Baidu's Wenxin, Alibaba's Qianwen, ByteDance's Doubao, Huawei's Pangu, and even Tesla's Grok.

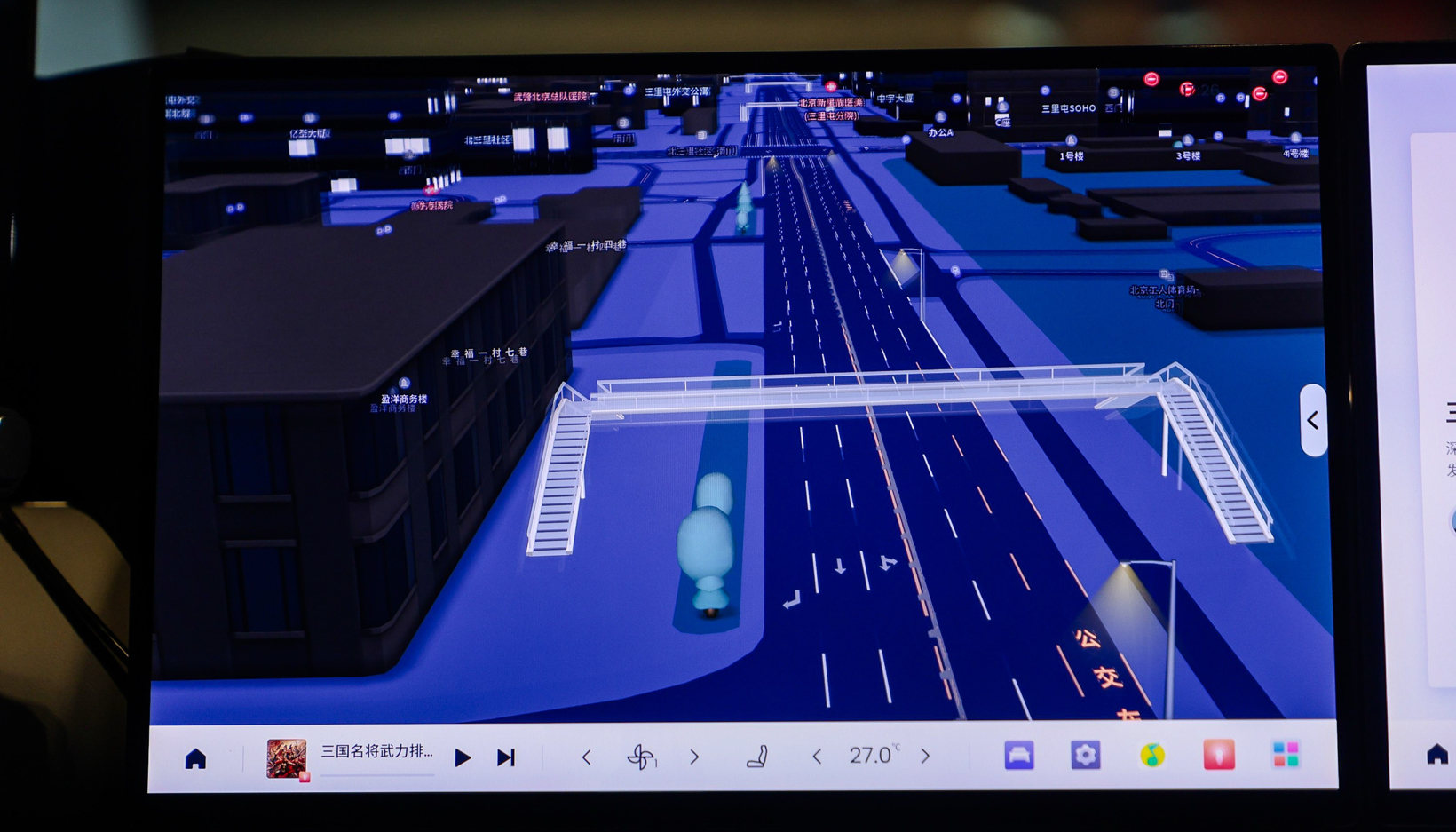

Image Source: NavInfo

For automakers, choosing a partner is not just a technical selection; it is a struggle over ecosystem binding and control of the user entry point.

She stated clearly that Great Wall will not rely entirely on any single supplier. "It's not a total replacement, but a gradual permeation, where we can co-create specific algorithms with the industry."

He revealed that Great Wall will use deep proprietary R&D for flagship products, gradually switch to self-developed solutions for "inclusive intelligence" (the 100 to 200 TOPS range), and remain open to excellent industry solutions for "mid-tier" and "high-end" models.

The logic here is that an intelligent driving system is not a one-time product but a service requiring continuous evolution. If the core chain is held by a supplier, the automaker loses control over the iteration rhythm—which She believes is the biggest trap for traditional automakers in transition.

Redefining "The Car That Knows You"

Beyond the technical rivalry, a deeper shift is underway: the relationship between human and car is being reconstructed by AI.

She proposed a concept: the "Human-Agent-Machine" cockpit agent interaction paradigm.

The traditional interaction is a "human-machine" binary, where users directly operate buttons or issue voice commands. In the new paradigm, an agent ("Intelligence") acts as an intermediary. Users no longer face the "Body" (controls and ecosystem) directly but converse naturally with the agent, which then calls upon vehicle functions.

This sounds abstract, but the experience is disruptive.

Image Source: Tesla

She gave an example: when three people are in the car and a user wants to turn on the seat heater, the traditional way is to give a precise command: "Turn on the second-row right seat heater."

In the "Human-Agent-Machine" mode, the user just says, "Turn on the seat heater." The agent automatically identifies who is speaking and where they are seated, checks whether the windows need closing and whether the vents are aimed correctly, takes the user's body type into account, and then executes the optimal strategy.
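
The contrast between the two modes can be sketched in code. This is a minimal illustration, not Great Wall's implementation: the names `CabinContext` and `resolve_seat_heater` and the seat labels are hypothetical, and the sketch models only speaker localization, leaving out the window, vent, and body-type checks.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical seat labels; a real cabin model would be far richer.
SEATS = ("row1_left", "row1_right", "row2_left", "row2_right")


@dataclass
class CabinContext:
    """What the agent is assumed to know about the cabin at request time."""
    speaker_seat: str          # seat the voice was localized to
    occupied_seats: frozenset  # seats that currently have an occupant


def resolve_seat_heater(context: CabinContext,
                        explicit_seat: Optional[str] = None) -> dict:
    """Turn a possibly underspecified request into a concrete command.

    Traditional mode: the user names the seat (explicit_seat).
    Agent mode: the seat is inferred from where the speaker sits.
    """
    seat = explicit_seat if explicit_seat is not None else context.speaker_seat
    if seat not in SEATS:
        raise ValueError(f"unknown seat: {seat}")
    if seat not in context.occupied_seats:
        raise ValueError(f"seat is not occupied: {seat}")
    return {"function": "seat_heater", "seat": seat, "state": "on"}


# Three occupants; the request comes from the second-row right seat.
context = CabinContext(
    speaker_seat="row2_right",
    occupied_seats=frozenset({"row1_left", "row1_right", "row2_right"}),
)

# "Turn on the seat heater." No seat is named, so the context fills it in.
command = resolve_seat_heater(context)
print(command)
```

The user-facing request carries no seat at all; the precision that the traditional command demanded from the user is supplied by the cabin context instead.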

This isn't an improvement in voice recognition; it is a complete reconstruction of interaction logic. The shift from "human adapting to machine" to "machine adapting to human" represents a fundamental change in cockpit engineering.

Data from Great Wall's nearly 10 million connected users shows that human-car interaction is sparse: only about 4 to 5 interactions per hour, or roughly one every 12 to 15 minutes.

This means the value of a smart cockpit isn't in offering more operable functions, but in providing service at the exact moment the user needs it, in the most natural way possible.

She divides the evolution of Great Wall's agent into four stages—"Acquaintance, Understanding, Love, Companionship"—corresponding to a progressive journey from recognizing the user to understanding habits, proactive service, and full-time companionship.

But realizing this vision faces real-world constraints.

She admitted that the current AI-powered cockpit has "just passed the starting line" and is far from being able to recommend a "beautiful lifestyle." Using a marathon metaphor: "We likely still have a long way to go to complete the full course."

A notable trend is that Great Wall is not alone.

IM Motors' IM Ultra Agent, Baidu Map's AI cockpit agent, and Li Auto's VLA driver model are all evolving toward "vehicle agents." While routes differ, the direction is consistent: using AI to unify cockpit and driving, connecting the user and the entire vehicle through a single agent.

This means the industry's competitive focus is shifting from "feature stacking" to "experience depth." Whoever makes the user feel "this car truly gets me" wins the ticket to the next stage.

Another profound change is happening at the "personality" level.

Asked about his well-known remarks that "Tesla's FSD feels like sightseeing mode" and that "users were unhappy the Tank 400 was too gentle," and pressed on whether "personality is more important than capability limits," She responded that in the agent era, differentiated experiences across scenarios are "absorbed" by the agent.

The agent automatically finds the optimal balance between comfort, efficiency, and safety based on the context. Users just need to express "how to make me more comfortable and relaxed," without worrying about how the underlying system achieves it.

This kind of "personality" differentiation is precisely where traditional automakers have the best chance to build a moat.

Every automaker has a different user base, brand DNA, and scenario data. When these differences are fed into AI models, every automaker can theoretically train a unique agent.

Huawei's driving system prioritizes safety redundancy and full-scenario coverage; XPENG focuses on commuting efficiency; Li Auto leans toward family-friendliness; and Great Wall's carries off-road DNA. These differences aren't tuned via algorithm parameters but are "fed" by millions of kilometers of real-world data.

In this sense, the true value of bringing large models into cars isn't making all vehicles equally smart, but making each one unique.

In Conclusion

Looking back at Great Wall's intelligent transformation, She divides it into three phases:

Phase One (2021-2023) solved platformization, unifying over 240 software versions to enable OTA iteration.

Phase Two (2023-2025) focused on user co-creation, organizing over 1,000 user communications and 60-plus 48-hour follow-up visits, achieving over 90% OTA user satisfaction.

Phase Three (2025-present) enters the AI agent stage, reconstructing the user experience via the "Human-Agent-Machine" paradigm.

The evolution of these three phases is essentially a complete metamorphosis for a traditional automaker: from "selling hardware" to "selling software" to "selling experience." This path applies to almost all traditional automakers undergoing intelligent transformation.

She said something profound in the interview: "You cannot hand over the connection with users to a supplier." This is perhaps the sharpest reminder for the entire industry.

As AI reshapes the automotive sector, technology is never the biggest obstacle. The real trap lies in whether you are willing—and able—to face users directly. Because when the agent becomes the sole intermediary between the user and the car, whoever owns that intermediary owns everything.