Gasgoo Munich - PsiBot and Guanglun Intelligent recently announced funding rounds of 2 billion yuan and 1 billion yuan, respectively.

Both companies are newly minted "unicorns." Guanglun Intelligent is targeting the simulation and data infrastructure that powers the physical AI ecosystem. PsiBot, meanwhile, is tackling the challenge of dexterous manipulation, using a proprietary data collection engine to slash acquisition costs to a fraction of the norm. In essence, both companies are zeroing in on the foundational layer of data infrastructure.

With robot hardware proliferating, why is capital suddenly pouring heavily into the sector's "water sellers"?

The answer lies in a growing industry consensus: data is becoming the Achilles' heel of embodied intelligence.

If algorithms are the robot's "brain" and hardware its "skeleton," then data is the "blood" flowing through it. Without that blood, the brain's commands never reach the limbs, and feedback from the limbs never returns to the brain—paralyzing the entire system.

As embodied intelligence moves rapidly from proof-of-concept to large-scale deployment, the industry's main axis of competition is quietly shifting from a "hardware showcase" to a "data war."

Data Hunger: The Growing Pains of Embodied Robotics

In the world of AI, all intelligence stems from the "feeding" of data.

The emergence of large language model capabilities over the past few years was built on a massive foundation of internet text. Similarly, for embodied robots to achieve true "generality," they must drive their "brains" with vast amounts of data.

"Many teams assume that if an embodied model fails to train, the bottleneck lies in the training phase itself," Ding Yan, CTO of Luming Robotics, said earlier when discussing the importance of embodied data. "In reality, most problems are planted at the very start of data generation. Piling on more models or compute power later only accelerates the wrong inputs."

Moreover, for embodied intelligence, larger data scale and higher data quality translate directly into stronger model generalization and operational precision. Without data, even the most advanced algorithms and precision hardware are hollow shells without a soul.

Yet, unlike large language models, which can mine data from the internet at low or zero cost, the data required for embodied intelligence is far harder to acquire at scale due to its unique nature.

Image Source: PsiBot

First, there is the complexity of data modalities.

Unlike large language models, embodied intelligence requires multimodal data generated by robots interacting with the physical world. This goes beyond images and video to include real-time feedback from force, tactile, and auditory sensors, as well as the robot's own kinematic and dynamic parameters. The synchronous collection and annotation of such multidimensional data are far more complex than processing text or static images alone.

Second, there is the openness and diversity of application scenarios.

Embodied robots must navigate diverse 3D spaces ranging from homes and factories to malls and the outdoors. Their interactions involve both static objects and dynamic subjects like humans and other living beings. Physical interaction methods, object attributes, and environmental features vary wildly across scenarios. Factors like material texture, shape, lighting conditions, and even minor disturbances can significantly impact the data, causing the difficulty of collection, annotation, and processing to grow geometrically.

For example, teaching a robot to simply unscrew a bottle cap might require hundreds or thousands of attempts and recordings under varying lighting, bottle types, and grip strengths. Each attempt demands professional equipment and human coordination.

Third, there is the closed-loop sequential nature of data.

Like autonomous driving, embodied intelligence requires continuous closed-loop sequences of "state-action-new state." Since every robot action alters the environment, the model must learn to adjust its next move based on the new state. This means data collection must record not just actions, but simultaneously track environmental changes and decision-making processes, driving technical difficulty up exponentially.
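The "state-action-new state" structure described above can be illustrated with a minimal Python sketch. The `Transition` record, the `build_transitions` helper, and the toy one-dimensional values are hypothetical names and data chosen for illustration; they are not drawn from any company's actual pipeline.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Transition:
    """One closed-loop step (hypothetical record layout)."""
    state: Sequence[float]       # observation before the action
    action: Sequence[float]      # command sent to the robot
    next_state: Sequence[float]  # observation after the environment changed


def build_transitions(states, actions) -> List[Transition]:
    """Pair each action with the states recorded before and after it."""
    # Every action produces a new state, so one extra state is expected.
    if len(states) != len(actions) + 1:
        raise ValueError("need exactly one more state than actions")
    return [
        Transition(states[i], actions[i], states[i + 1])
        for i in range(len(actions))
    ]


# Toy episode: three recorded states linked by two actions.
episode = build_transitions(
    states=[[0.0], [0.1], [0.3]],
    actions=[[1.0], [2.0]],
)
```

In this sketch, the new state of one step is the starting state of the next, which is why the environment has to be recorded alongside every action rather than the actions alone.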

Finally, the strong coupling between data and hardware remains a critical bottleneck constraining the development of embodied data.

Image Source: AgiBot

Embodied data suffers from a "data follows the hardware" phenomenon. Differences in sensor layouts and algorithms across robot models often result in mutually incompatible data formats. For instance, assembly data from a factory production line cannot be directly migrated to a home service scenario. Furthermore, variations in hardware parameters across different brands and models exacerbate poor data compatibility.

He Han, a member of the National Committee of the Chinese People's Political Consultative Conference (CPPCC), previously stated bluntly that domestic research institutions and companies are currently fighting their own battles regarding data collection platforms, sensor interfaces, and data formats. This has created numerous "data islands." This fragmented state makes data difficult to share and reuse, leaving the industry without widely recognized, high-quality, large-scale open-source datasets—a reality that severely hampers technological progress.

Even if the collection hurdle is cleared, subsequent data cleaning and annotation remain deep pits to navigate. First-person video must be dissected into atomic action segments; force data needs temporal alignment; 3D point clouds require pose annotation. Each of these tasks consumes significant human labor and time.
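To give a sense of what temporal alignment involves, here is a minimal Python sketch that matches each video frame to the force sample closest in time. The function name `align_nearest`, the millisecond timestamps, and the sample values are assumptions made for illustration; real pipelines may interpolate or resample instead of picking the nearest sample.

```python
from bisect import bisect_left


def align_nearest(frame_times, force_times, force_values):
    """For each frame timestamp, return the force value nearest in time.

    Assumes force_times is sorted ascending and matches force_values in length.
    """
    if not force_times or len(force_times) != len(force_values):
        raise ValueError("force_times and force_values must be non-empty and equal length")
    aligned = []
    for t in frame_times:
        i = bisect_left(force_times, t)  # first force sample at or after t
        if i == 0:
            j = 0
        elif i == len(force_times):
            j = len(force_times) - 1
        else:
            # Pick the nearer neighbour; ties go to the earlier sample.
            j = i if force_times[i] - t < t - force_times[i - 1] else i - 1
        aligned.append(force_values[j])
    return aligned


# Hypothetical data: ~30 Hz camera frames and a 100 Hz force sensor (times in ms).
frames_ms = [0, 33, 66]
force_ms = [0, 10, 20, 30, 40, 50, 60, 70]
force_newtons = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7]
aligned = align_nearest(frames_ms, force_ms, force_newtons)
```

Even this simplified version shows why the work is labor-intensive: sensors run at different rates, so every modality has to be reconciled against a shared clock before a sequence becomes usable for training.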

The current reality is that existing annotation tools focus mostly on static images or simple video, struggling to efficiently support the demands of VLA models for long-sequence, 3D spatial, and physical dynamic annotation.

Due to these multiple challenges, the embodied intelligence industry faces a massive data gap. According to data from CSDN, a prominent Chinese IT technology platform, embodied intelligence requires hundreds of petabytes of physical interaction data, yet the current shortfall exceeds 99%.

With such a significant data chasm, data collection is no longer just a nice-to-have auxiliary task; it is a decisive battle for the industry's next stage. Specifically, figuring out how to establish data pipelines that are low-cost, high-quality, and highly efficient has become the critical pass that embodied intelligence must cross to move from the lab to the real world.

Four Schools of Thought Chase the Embodied Data "Gold Mine"

There is no doubt that in the realm of embodied intelligence, data is becoming the key anchor for winning the next phase of competition.

Drawing parallels with the technological evolution of autonomous driving, it is easy to predict that in the embodied intelligence race, whoever first cracks the "collection-training-deployment-feedback" data loop will gain a generational advantage in model iteration speed. Once such an advantage is established, it will be extremely difficult for latecomers to catch up.

For this reason, facing the same "data puzzle," different enterprises—guided by their distinct technological DNA—are offering varied solutions, resulting in four mainstream technical routes. Each route makes different trade-offs between "data quality" and "acquisition cost," much like four exploration teams tunneling toward the same "gold mine" from different directions.

The first technical route is teleoperation collection, where human operators remotely control robots to perform specific tasks, recording data such as joint angles, end-effector poses, camera images, and force sensor readings.

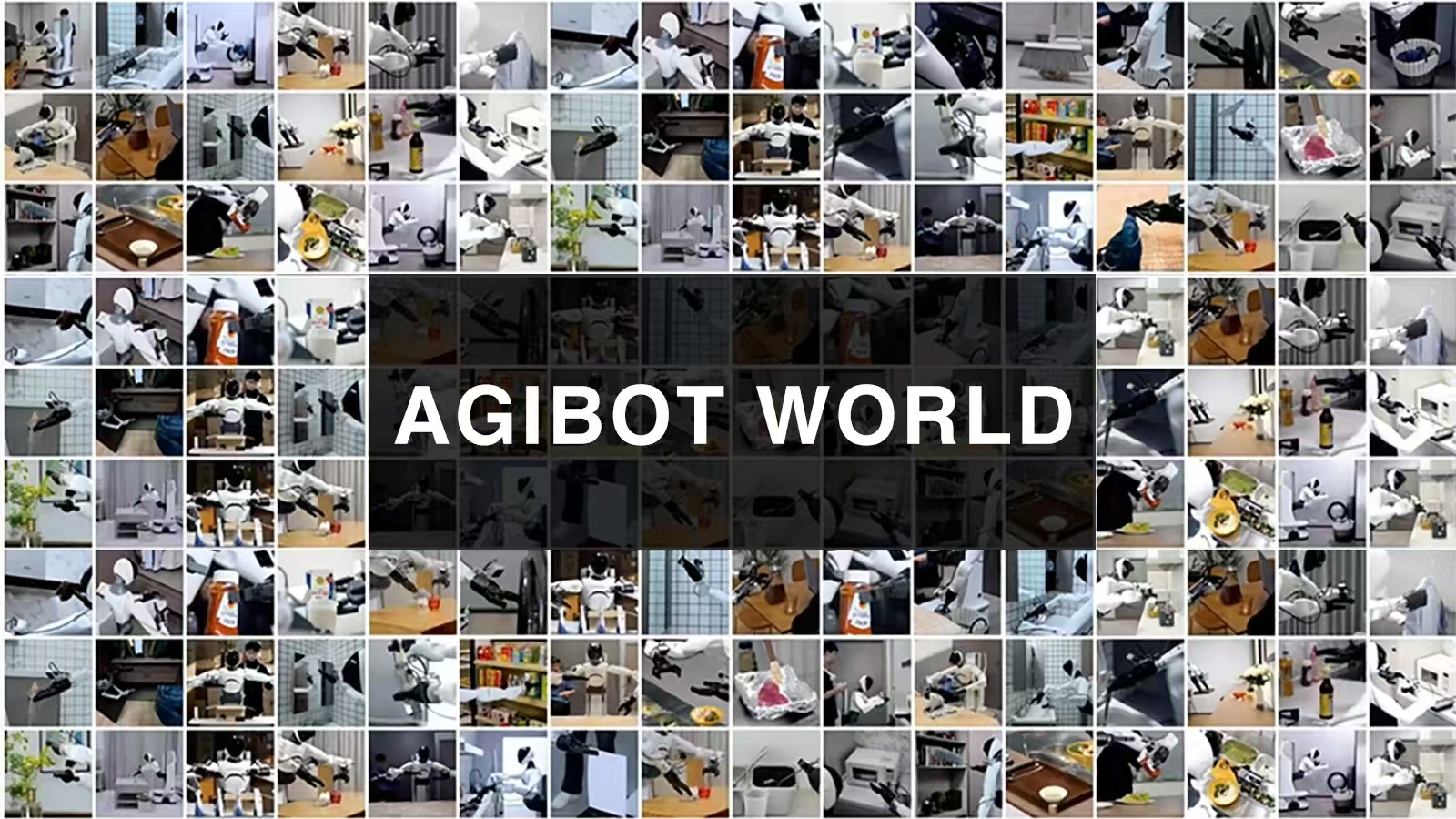

Image Source: AgiBot

AgiBot's data collection factory and application test base in Shanghai is a typical representative of this route. Built on this base, the AgiBot World dataset closely replicates five core scenarios: home, dining, industrial, retail, and office. It includes hundreds of real-world sub-scenarios and over 3,000 real items, building the necessary conditions for robot R&D and testing to achieve embodied intelligence.

But this is also an extremely "capital-intensive" route, prioritizing high quality at a high cost.

"Teleoperation data collection can provide high-quality, real-world robot operation data, resulting in effective model training," Lyu Jun, a research scientist at Noematrix, said recently at the 4th Embodied Intelligent Robot Industry Development Forum hosted by Gasgoo. However, he also frankly admitted that the limitations of teleoperation are obvious, summarizing them in three main points:

First, the cost is extremely high, as it requires expensive robot bodies and teleoperation equipment;

Second, the operation is difficult. Relevant research shows that about one-third of ordinary subjects fail to complete assigned tasks when using teleoperation equipment for the first time. Even those who succeed generally operate slowly and with a noticeable mechanical feel;

Third, teleoperation has an insurmountable flaw: there is often a huge gap between the diversity of backgrounds and objects in the real world versus the data collection factory. This causes the collected data to deviate significantly from the real world, leading to poor model performance in actual scenarios.

In short, while teleoperation is the "gold standard" for embodied data, its high cost and low efficiency make it difficult to scale up rapidly.

Compared to the "heavy investment" of teleoperation, the second route—simulated synthetic data—attempts to use infinite virtual space to combat the long-tail problems of the real world.

Simulated synthetic data involves using physics simulation engines to generate data on robot-environment interaction in virtual settings. The advantages of this route include lower cost per data point, easier scalability, highly controllable environments, and nearly infinite scenario expansion.

GalaxyBot is a staunch supporter of this route.

Based on a virtual-real fusion training paradigm that prioritizes synthetic simulation data supplemented by real robot data, GalaxyBot has built a 10-billion-scale embodied intelligence dataset. According to the company, this solution enables humanoid robots to generalize to new scenes and objects—drawing inferences from one instance—with few or even zero samples. It claims training efficiency is 1,000 times higher than Tesla's, while models trained on this dataset achieve a 99% success rate.

Guanglun Intelligent, which recently secured 1 billion yuan in financing, has also adopted this route.

Image Source: Guanglun Intelligent

In the view of Xie Chen, CEO of Guanglun Intelligent, the robotics field currently faces a massive data shortage. Unlike large language models, there aren't enough robots in the real world to continuously collect data. Therefore, it is essential to generate sufficient data in simulation environments via human teleoperation to train robot foundation models.

Guanglun Intelligent believes that in the era of physical AI, the simulation world, behavioral data, and evaluation systems are becoming the new technological foundation.

To this end, Guanglun Intelligent has built a complete chain covering physically realistic simulation, large-scale data production, and model capability evaluation, centered on a three-layer architecture of World, Behavior, and Evaluation. In the data segment, Guanglun has constructed a large-scale non-body-specific data engine covering two paths: simulated synthetic data and human video data, which is now being delivered at scale globally.

DexForce goes even further with a bold hypothesis: relying solely on 100% generative simulation data, provided the generation rate breaks through a critical point, robots can emerge in the real world with generalization capabilities surpassing the state-of-the-art (SOTA).

Even so, this cannot completely hide the flaws of simulation: virtual environments are often too idealized and cannot perfectly simulate real physical laws. This causes some models to learn excellent strategies in simulation, only to see performance decay when migrated to physical robots. It's like scoring full marks in a game but failing the real-world exam.

Therefore, the industry generally believes that simulation must ultimately be combined with real robot data to truly solve the "last mile" problem. In GalaxyBot's solution, robots first traverse various extreme scenarios in the virtual world, then use a very small amount of real robot data for final practical polishing.

If simulation synthesis is about building a "training ground" in the virtual world, then the third route—portable collection (UMI)—is like carrying a "data recorder" with you, allowing data collection to better break through scene limitations.

UMI data collection involves handheld lightweight devices equipped with grippers, fisheye cameras, and IMUs to demonstrate operations in real environments. It records key data such as force feedback, image information, and motion trajectories in real-time, decoupling the data for use by different robots.

Compared to teleoperation, which also collects real-world data, UMI portable collection offers lower hardware costs, higher efficiency, and cross-platform reusability, greatly enhancing the reuse value of data.

Image Source: PsiBot

Luming Robotics, Tashi Zhihang, PsiBot, Noematrix, and international players like Sunday Robotics and Generalist are all practitioners of this technical route.

Among them, PsiBot's proprietary embodied native human data collection solution, Psi-SynEngine, can directly capture operational data from frontline workers in real-world settings. It covers logistics, factories, retail, hotels, and homes, requiring no secondary migration.

Unlike traditional UMI schemes that primarily use grippers, PsiBot's Psi-SynEngine end-effector is paired with a portable exoskeleton tactile glove data collection kit. Even so, the comprehensive cost of this solution has reportedly dropped to about one-tenth that of real-robot teleoperation. Building on this, PsiBot plans to launch a portable crowdsourced version in the future, which could drive costs down even further.

Noematrix's RoboPocket, by leveraging the mature hardware ecosystem of smartphones, enables every ordinary user to become a participant in data collection.

Image Source: Noematrix

This solution uses the built-in RGB cameras, depth cameras, and sensors of smartphones to replace traditional, expensive, and bulky professional collection equipment, achieving a paradigm shift from "fixed-point collection" to "anytime, anywhere collection." According to data previously released by Noematrix, RoboPocket signed contracts for hundreds of units in the first month after its formal launch and began delivery at scale earlier this year.

Subsequently, Noematrix struck a strong balance between cost and efficiency through deep cooperation with a leading second-hand electronics platform. Based on a strict 12-month depreciation calculation, the hardware cost of this solution reportedly accounts for only 3.5% of the total data collection cost.

But UMI also has its "Achilles' heel"—data quality governance. Due to a lack of supervision over the collection process, much of the data captured by devices in this route may be unusable for training, requiring rigorous data governance processes.

Lyu Jun admitted candidly that, based on data collected by the company's devices in Shanghai during the first week of March, RoboPocket currently averages about 3 hours of effective data per person per day, calculated on an 8-hour workday. That is roughly 37.5% of the working time.

The fourth route is human video learning, which involves letting robots "learn by watching videos" like humans. This method offers lower costs and makes it easier to acquire real-world scenario data at scale.

Tesla, a representative enterprise on this route, spent vast amounts of time and money on real-world data collection in its early stages. Last May, Tesla announced that Optimus would move away from traditional motion capture and teleoperation training, shifting to a "pure vision" AI training mode based on video data to boost collection efficiency and training scale.

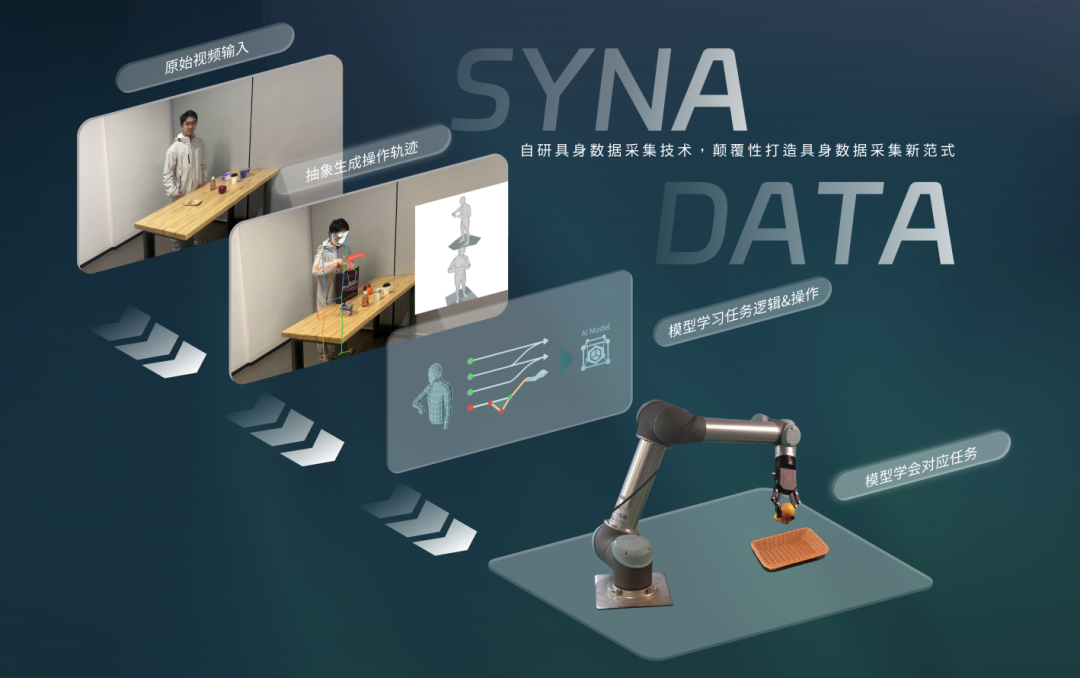

Image Source: Shutu Technology

Shutu Technology's SynaData solution is also a typical representative of this route. This solution pioneered a new path for extracting multimodal training data from internet monocular videos. It claims to have reduced the comprehensive cost of embodied intelligence data collection to 0.5% of the industry average, effectively addressing the long-standing dilemma of data cost versus quality.

In addition, companies like DexForce, LimX Dynamics, and Spirit AI have all adopted video learning methods to varying degrees for embodied intelligence training.

Even so, the drawbacks of video learning cannot be ignored: information density is relatively low, and it lacks key interaction signals like force and touch. It requires powerful post-processing technology to convert video into training data.

Conclusion

From AgiBot's teleoperation factory to GalaxyBot's simulation empire, and from Noematrix's RoboPocket to Shutu Technology's video learning, the different data routes, each with its own pros and cons, together form the diverse ecological landscape of the current embodied data field.

Many leading enterprises are even laying out multiple technical routes simultaneously. This strategy of "multi-pronged advancement" confirms a fact: this "gold rush" for data in the embodied intelligence field is far from reaching its final act.

Moving forward, as technology evolves and practices deepen, these schools of thought are expected to further integrate and innovate. We may see the selection of appropriate collection method combinations based on different stages, projects, or budget constraints, or the emergence of entirely new data paradigms.

Ultimately, the deciding factor in this "data war" may not lie in a single breakthrough by any one technical route, but in who can be the first to successfully run the complete closed loop of "collection—training—deployment—feedback."