Gasgoo Munich - As the smart driving landscape of 2026 takes shape, what is the industry really fighting for?

If the playbook is still just about price wars, you've already misread the board. At Hesai's recent Tech Open Day, a new signal emerged: LiDAR is undergoing a fundamental shift from "geometric perception" to "physical imaging."

Sun Kai, co-founder and chief scientist at Hesai, floated a disruptive idea during his keynote: "LiDAR with camera-level imaging is becoming reality."

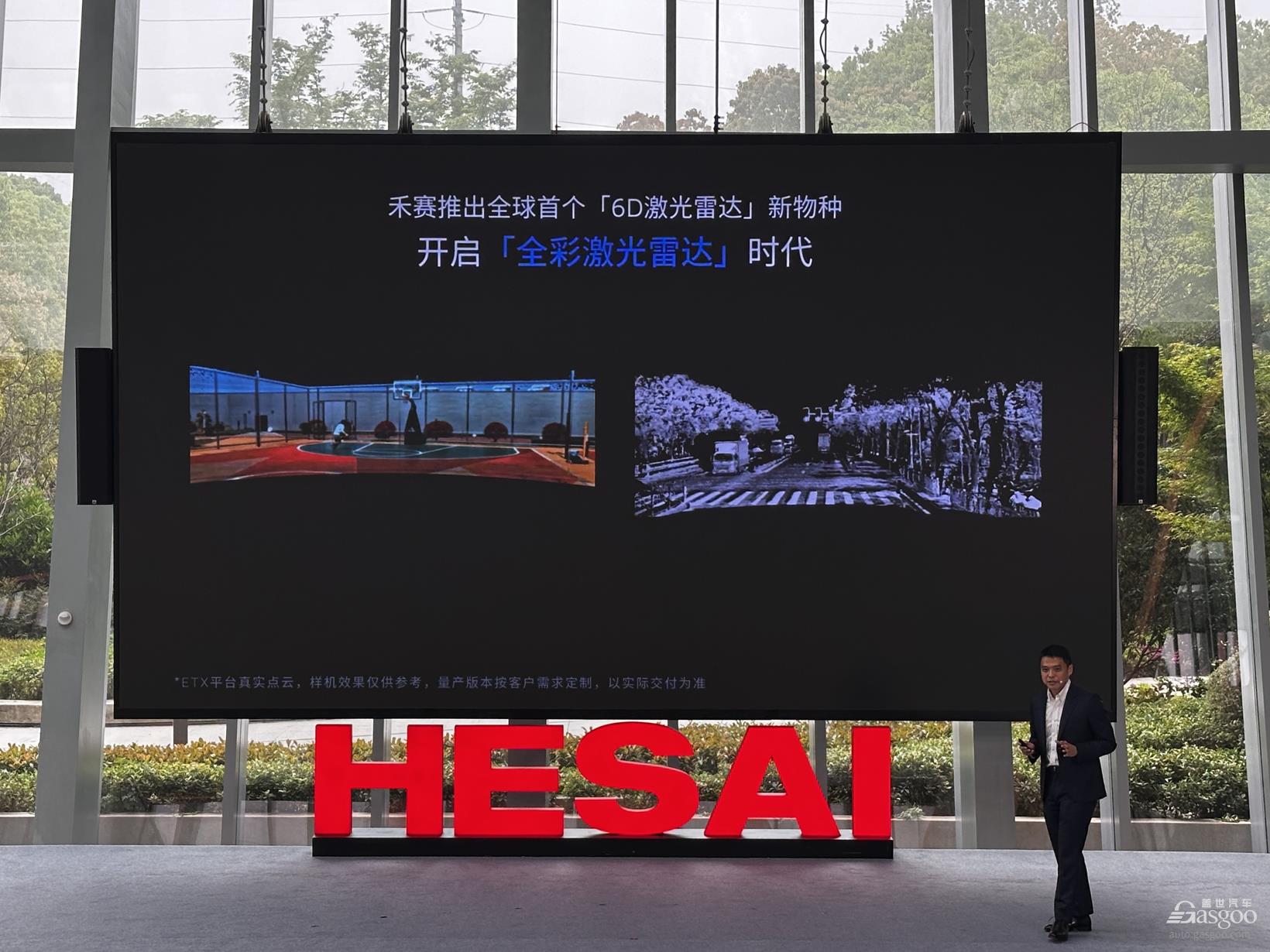

Behind that statement lies the global debut of Hesai's "PICASSO" chip platform, along with the first LiDAR built on it: the ETX, which Hesai bills as the world's first 6D full-color LiDAR.

Why equip LiDAR with "camera pixels"?

"The world is inherently three-dimensional, but due to technical limitations, we've had to record it with 2D cameras," Sun Kai said. That observation highlights the biggest pain point in autonomous driving perception: the loss of information.

The industry has long been split between the "LiDAR camp" and the "pure vision camp." LiDAR excels at precise ranging and building 3D skeletons, but it lacks color and texture, which leaves it blind to semantic cues such as the color of a traffic light. Cameras capture color and detail but lack depth, and they are heavily affected by lighting conditions.

The result has been a "Frankenstein" solution: physically stitching LiDAR and cameras together.

But this approach has a fatal flaw: spatiotemporal asynchrony.

Spatially: With two separate hardware units, pixels cannot be perfectly aligned. A LiDAR point might hit a road sign, while parallax could cause the camera pixel to capture the empty air behind it.

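Temporally: The two sensors use independent shutters, so their sampling times aren't synchronized. For fast-moving objects, even a tiny time lag means each sensor records the object at a slightly different position, which can cause perception errors.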
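To get a feel for the size of both errors, here is a minimal Python sketch. The closing speed, the 20 ms gap between captures, and the 0.3 m mounting distance between LiDAR and camera are hypothetical values chosen for illustration, not figures from Hesai or any specific vehicle.

```python
import math

def temporal_offset_m(closing_speed_kmh: float, lag_ms: float) -> float:
    """How far an object moves between two unsynchronized sensor captures."""
    speed_m_per_s = closing_speed_kmh / 3.6  # km/h -> m/s
    return speed_m_per_s * lag_ms / 1000.0   # ms -> s

def parallax_deg(baseline_m: float, distance_m: float) -> float:
    """Angular disagreement between two sensors mounted baseline_m apart.

    Assumes the target sits directly in front of one sensor, so the other
    sensor sees it off-axis by atan(baseline / distance).
    """
    return math.degrees(math.atan2(baseline_m, distance_m))

# Hypothetical scenario: two cars at 120 km/h approaching each other,
# sensors sampling 20 ms apart, LiDAR and camera mounted 0.3 m apart.
print(f"time-lag offset: {temporal_offset_m(240, 20):.2f} m")  # 1.33 m
for d in (5, 20, 100):
    print(f"parallax at {d:>3} m: {parallax_deg(0.3, d):.2f} deg")
```

In this simple model, parallax shrinks with distance and matters most for nearby objects, while the time-lag error grows with relative speed no matter how far away the object is.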

So why add camera pixels to LiDAR? The answer is fusion.

When the photosensitive element shifts from traditional image sensors to SPADs (single-photon avalanche diodes), things change. Deeply fusing a SPAD image sensor with a SPAD depth sensor lets every pixel both measure distance (XYZ) and perceive color (RGB).

This is what Hesai defines as "6D"—3D spatial structure plus 3D color information.
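One way to picture a "6D" point is as a single record that carries geometry and color together, so no cross-sensor alignment step is needed. The Python sketch below is purely illustrative: the `Point6D` name, the field layout, meters for XYZ, and 0-255 for RGB are all assumptions, not Hesai's actual data format.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Point6D:
    """Hypothetical 6D point: 3D position (meters) plus 3D color (0-255)."""
    x: float
    y: float
    z: float
    r: int
    g: int
    b: int

    def __post_init__(self) -> None:
        # Reject color values outside the assumed 8-bit range.
        for channel in (self.r, self.g, self.b):
            if not 0 <= channel <= 255:
                raise ValueError("RGB channels must be in 0..255")

    def range_m(self) -> float:
        """Straight-line distance from the sensor origin."""
        return math.sqrt(self.x ** 2 + self.y ** 2 + self.z ** 2)

# A red return 5 m from the sensor: depth and color arrive in one sample.
signal = Point6D(x=3.0, y=4.0, z=0.0, r=255, g=0, b=0)
print(signal.range_m())  # 5.0
```

Because each point already holds both XYZ and RGB, a downstream consumer could filter by distance and by color in the same pass, instead of projecting LiDAR points into a separately captured camera frame.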

Imagine a pitch-black night. Traditional cameras may be swamped by noise, while standard LiDAR can sketch outlines but cannot tell a deep pothole from the road surface. With the "hypersensitive" PICASSO chip, LiDAR can now produce daytime-quality images at night, with precise depth data built in.

It's a paradigm shift. LiDAR is no longer just a "rangefinder"; it has become an "all-weather 3D camera." It no longer relies on external cameras to fill in color data—it can describe the world entirely on its own.

As Sun Kai noted, this isn't just a technical breakthrough; it's groundwork for the immersive experiences that will come with 3D headsets in the next decade. When the next generation is born, we won't record their growth with 2D video, but with 6D full-color point clouds containing real color and depth.

Why is Hesai doing this now?

If the first part answers the question of technical necessity, the second part explores the strategic logic.

The answer likely lies in the ETX series and Hesai's Kosmo business.

Hesai launched the ETX platform on the "PICASSO" chip. It supports up to 4,320 lines of full-color 4K ultra-HD perception, a maximum detection range of 600 meters, and the ability to identify 15-by-25-centimeter wooden blocks within 150 meters.

Mass production is set for the second half of this year.

To put that in perspective, Sun Kai offered a comparison: a 500-line LiDAR typically corresponds to a 300-meter range. ETX's 600-meter reach gives vehicles more time to react at highway speeds. The key isn't just an ultra-high line count; it's delivering full-color imaging without giving up that line count or range.
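The extra reaction time is simple arithmetic: divide the detection range by the vehicle's speed. The sketch below assumes a stationary obstacle and ignores detection latency and target reflectivity, so it shows only the upper bound that range alone sets.

```python
def seconds_to_cover(range_m: float, speed_kmh: float) -> float:
    """Time for a vehicle at speed_kmh to travel range_m toward a stationary obstacle."""
    if speed_kmh <= 0:
        raise ValueError("speed must be positive")
    # range / (speed_kmh / 3.6), rearranged to keep the arithmetic simple
    return range_m * 3.6 / speed_kmh

for speed in (100, 120, 130):
    t300 = seconds_to_cover(300, speed)
    t600 = seconds_to_cover(600, speed)
    print(f"{speed} km/h: 300 m -> {t300:.1f} s, 600 m -> {t600:.1f} s")
```

At 120 km/h, for example, a 300-meter range leaves 9 seconds before the vehicle reaches the edge of detection, while 600 meters doubles that to 18 seconds.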

This implies that in L3 or even L4 autonomous driving architectures, LiDAR is no longer just a "backup" for cameras but a core decision-making sensor. When the system needs to brake or evade in extreme scenarios—bright light, heavy rain, total darkness—only a sensor with both XYZ and RGB data can provide reliable redundancy.

Another product is even more intriguing: if ETX solves for today's cars, Kosmo is solving for tomorrow's robots.

Image Source: Hesai

At the open day, Hesai co-founder and CEO Li Yifan revealed a critical strategic pivot: "Once LiDAR technology reaches a point where it's 'good enough,' why not use it to solve other problems?"

Kosmo was born from exactly this kind of high-fidelity 3D recording capability. It isn't just a hardware product; it's a data acquisition tool.

Li Yifan was candid when asked about "world models": "We didn't build this because 'world models' are trending. We discovered during development that this method of recording 3D spatial data with extreme fidelity is exactly what training world models requires."

Regardless of how large AI models evolve, or whether "world models" become mainstream, hardware that can perceive the physical world with the greatest precision will always be a core asset. Through Kosmo, Hesai is pushing LiDAR's boundaries from cars to embodied-intelligence robots, expanding its role from simple navigation and obstacle avoidance to semantic understanding and reconstruction of the physical world.

Where is the finish line for LiDAR?

At this point, a question arises: if ETX can hit 4,320 lines, does that mean LiDAR will endlessly "stack" line counts in the future?

The answer is no.

"A 500-line LiDAR with 300-meter ranging capability is a reasonable metric," Sun Kai said. That highlights a key concept: the marginal utility of line counts. Resolution (lines) and range must be matched.

High line count, short range: If you have 2,000 lines but can only see 100 meters, those lines are useless beyond that distance, because the sensor gets no return from objects farther out. The high line count becomes just a meaningless number.

Long range, low line count: If you can see 600 meters but have only 16 lines, distant objects are just blurry outlines, and small obstacles such as tires or low barriers cannot be identified.
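The trade-off can be made concrete with a back-of-the-envelope model. If N lines are spread evenly across a vertical field of view, the gap between adjacent lines grows linearly with distance, so fewer lines land on a small obstacle the farther away it is, and none do past the sensor's maximum range. The Python sketch below assumes a 25° vertical field of view, uniform line spacing, a hard range cut-off, and a 0.25 m-tall obstacle. All four are hypothetical simplifications; many automotive LiDARs concentrate lines in a region of interest near the horizon, which this model ignores.

```python
import math

def vertical_spacing_m(lines: int, distance_m: float, vfov_deg: float = 25.0) -> float:
    """Gap between adjacent scan lines at distance_m.

    Assumes `lines` beams spread evenly over a vertical field of view of
    vfov_deg degrees (a hypothetical value, not a Hesai spec).
    """
    if lines < 2:
        raise ValueError("need at least two lines to have a spacing")
    step_rad = math.radians(vfov_deg / (lines - 1))
    return distance_m * math.tan(step_rad)

def expected_line_hits(lines: int, max_range_m: float, distance_m: float,
                       target_height_m: float, vfov_deg: float = 25.0) -> float:
    """Approximate number of scan lines landing on a target of given height.

    Beyond max_range_m no return comes back, so extra lines contribute nothing.
    """
    if distance_m > max_range_m:
        return 0.0
    return target_height_m / vertical_spacing_m(lines, distance_m, vfov_deg)

# Hypothetical configurations based on the examples in the text.
configs = [
    ("2,000 lines / 100 m", 2000, 100),
    ("16 lines / 600 m", 16, 600),
    ("500 lines / 300 m", 500, 300),
]
for label, lines, max_range in configs:
    hits = [expected_line_hits(lines, max_range, d, 0.25) for d in (50, 150, 300)]
    print(label, [f"{h:.2f}" for h in hits])
```

Under these assumptions, the 2,000-line sensor puts many lines on a nearby obstacle but none at 150 m, the 16-line sensor puts less than one line on it at any distance, and the 500-line, 300 m pairing keeps at least about one line on it out to its full range. That is the "matching" Sun Kai describes.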

Sun Kai's view is direct: line count isn't the only metric, nor is it the most important one.

The so-called "thousand-line" or "ten-thousand-line" race is essentially about seeing small objects far away. If ranging capability can't keep up, simply stacking lines is a "false demand." For Hesai, the real priority is full-element perception and "invisible" safety.

So, where is the finish line for LiDAR?

Combining insights from Li Yifan and Sun Kai, the endpoint likely has three dimensions:

Dimension One: The Performance "Sweet Spot."

As Sun Kai noted, lines and distance must be proportional. Future LiDAR won't infinitely stack lines; it will seek a "good enough and safe" balance. For forward-facing main LiDARs, that balance might be 1,000 to 2,000 lines paired with 300 to 500 meters of range. For fill-in LiDARs such as the FTX, it's an ultra-wide 180° field of view paired with high frame rates at short-to-medium distances.

Dimension Two: From "Sensor" to "Airbag."

Li Yifan noted that LiDAR is "redundancy" at the L2+ stage, but "multi-system decision-making" at L3/L4. Its positioning will evolve from "auxiliary perception" to "invisible airbag." It may stay silent normally, but in extreme scenarios where cameras fail—backlighting, night, storms—it must be decisive and ensure the car stops. That "reliability in extreme scenarios" is the ultimate measure of a LiDAR's value.

Dimension Three: Extreme Volume Compression and Ubiquity.

Sun Kai painted a vivid future in his speech: as chip technology like "PICASSO" matures, LiDAR units could shrink to the "size of a thumb."

That means LiDAR will break the boundaries of the automobile, entering phones, glasses, and smart homes. When it becomes as ubiquitous as cameras, it will complete its final evolution: from an "expensive autonomous driving kit" to an "extension of human vision."

Industry Upheaval and "The Darkness Before Dawn"

Finally, we have to pull our gaze back from technology to the harsh realities of business.

As Horizon Robotics CEO Yu Kai said during a roundtable, the industry is in the "darkness before dawn."

Hesai has posted an enviable scorecard: 3.03 billion yuan in full-year 2025 revenue, a full-year GAAP profit that makes it the first LiDAR maker worldwide to reach that milestone, and the top market share for 13 consecutive months. Even so, the entire sector is still enduring a brutal "price war."

Why build a "premium" high-performance product like ETX?

Li Yifan's answer was sharp. He used the analogy of "steak and steak sauce." Without a great chef, you don't need top-tier steak; without top-tier steak, even a great chef can't cook. The inflection point for smart driving is the moment hardware and algorithms finally meet in the middle.

Today, consumers buy smart driving features that are "good enough." But when L3 and L4 truly arrive, and users are willing to pay for an intelligent experience that feels like a "soul mate," they will demand "seamlessness" and "extreme safety."

At that point, only LiDAR with 6D full-color, ultra-long range, and high reliability can support that "silky smooth" driving experience.

Back to the original question: Where is the finish line for LiDAR?

Image Source: Hesai

Hesai's answer: it's not in a specific line count, nor a specific detection distance. It lies in becoming the most faithful, clearest bridge between the physical world and the digital one.

When LiDAR gains the ability to perceive color and depth like a human, the "eyes" of autonomous driving will finally be fully open.